Every builder I know checks the comments.

Not because they trust them, but because they can’t help it. Beneath the noise lives a signal we all chase: proof that what we’re making actually matters to other people. In a world optimized for clicks and curation, the raw pulse of collective opinion has become one of the last places where human meaning leaks through.

We like to imagine ourselves as rational, independent thinkers. Yet an invisible social field shapes most of our decisions: what to buy, what to build, what to believe. Even when we resist consensus, we define ourselves in relation to it. Understanding what people think, and why they think it, isn’t optional for builders. It’s the difference between creating something people actually want and building expensive lessons in humility.

The Comfort and Context of the Crowd

There’s wisdom in crowds, but not because they always converge on truth. Often, they don’t. Truth, especially early truth, tends to start as a contrarian position. But crowds provide something just as vital: context. They tell us what people are noticing, fearing, celebrating, misunderstanding. They give us the social coordinates to make sense of our own place in the world.

Psychologists Baumeister and Leary (1995) argued that belongingness is not optional: it’s as fundamental as food and shelter. That drive manifests everywhere: the need to check reviews before buying, the urge to see how others reacted to a film we just watched, the compulsion to refresh the feed after posting something we care about.

Being part of a group, even digitally, creates a sense of safety and meaning that’s hard to replicate in isolation. This is not mere conformity; it’s contextual navigation. Understanding the group helps us understand ourselves.

The visible pulse of crowd engagement: Like counts, shares, and comments become the social proof that drives the fundamental human need for belonging. These metrics transform abstract social validation into tangible, quantified feedback that fuels our participation in digital crowds.

The Two Broken Mirrors of Modern Information

Today’s information ecosystem distorts this instinct in two opposite ways.

On one side, curated expertise filters the world into neat conclusions: clarity without nuance. On the other, personalized algorithms mirror our past clicks back to us, promising relevance while quietly narrowing perspective. Scroll through any trending page and you’ll see the split: polished certainty on one side, algorithmic déjà vu on the other. Both claim to know what matters. Neither truly listens.

What’s missing is the raw human layer: the messy, unfiltered dialogue that happens in comment sections, forums, Discord servers, and threads. These spaces often turn chaotic, even hostile, but they contain the kind of pattern recognition that emerges when thousands of people react to the same thing, something curated feeds can’t replicate.

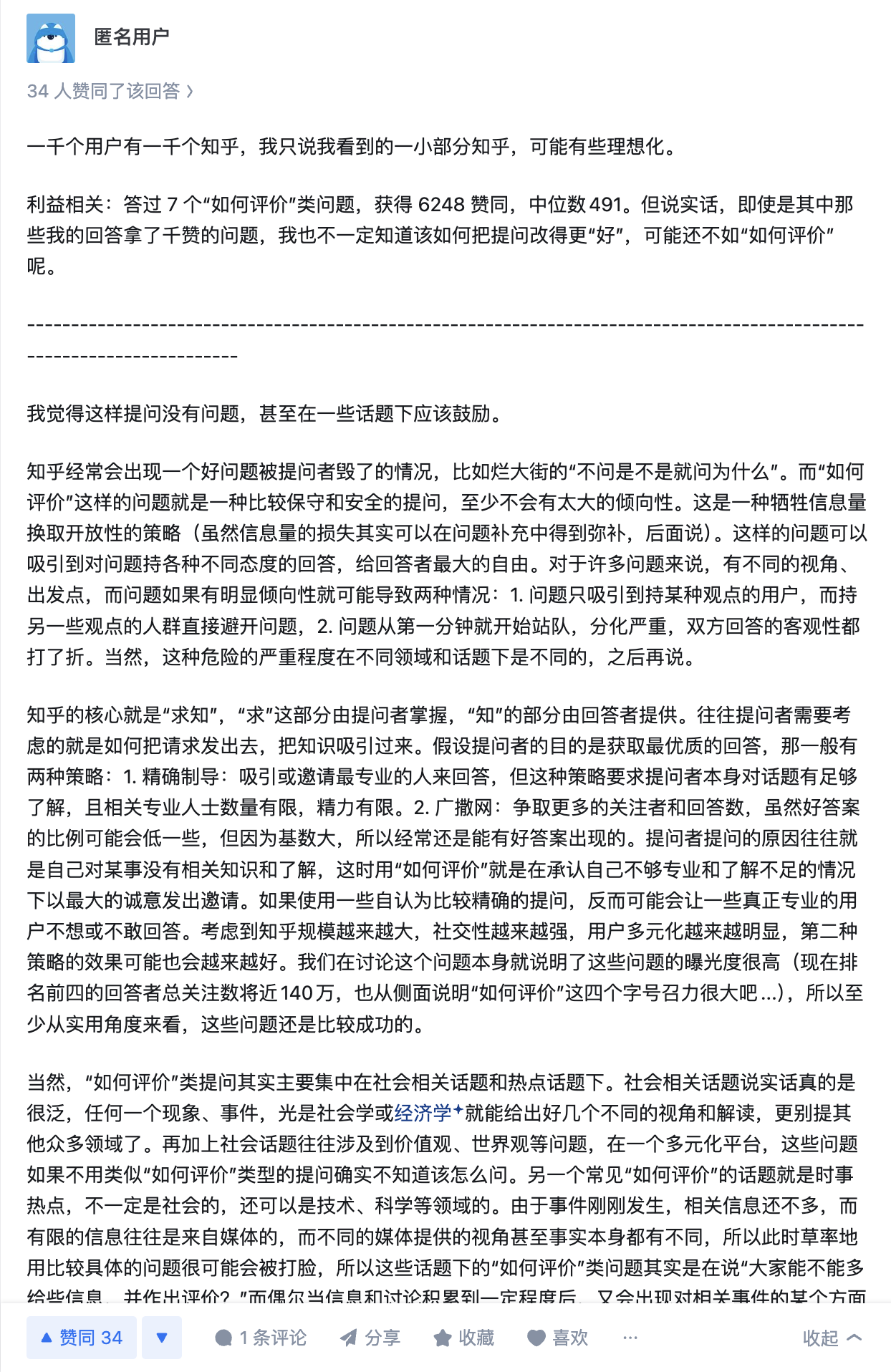

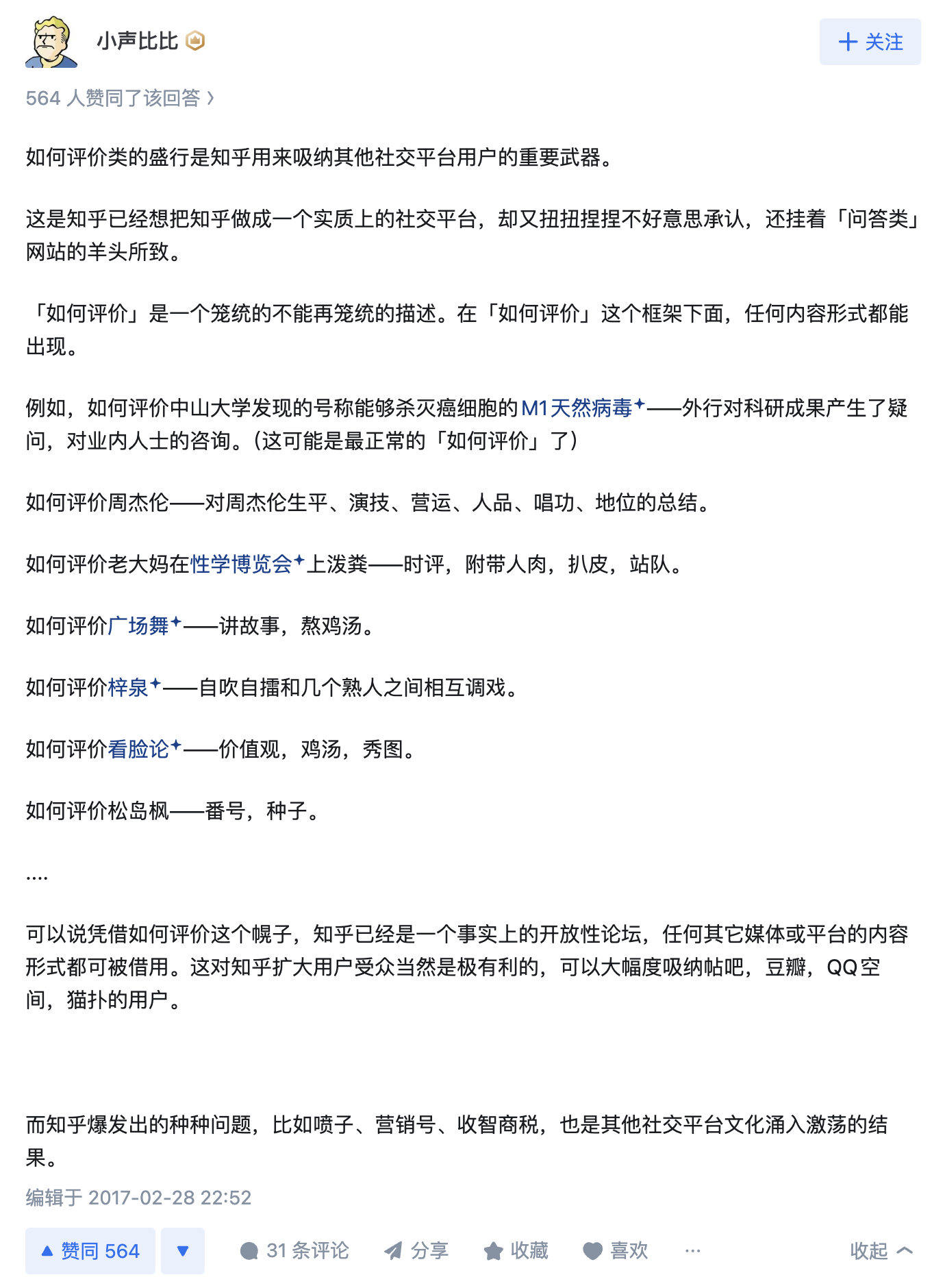

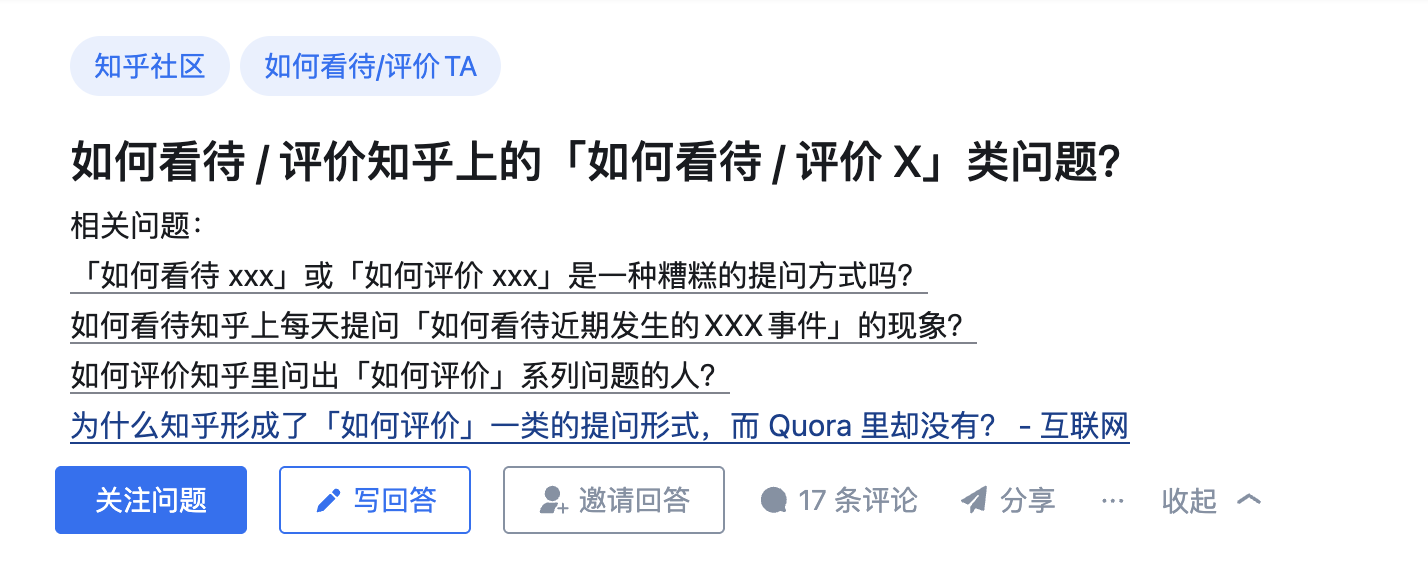

Zhihu’s “How to evaluate” format demonstrates how structured questioning shapes collective opinion formation. Note how the format itself becomes a lens that influences what aspects of a topic the crowd focuses on.

As Palantir’s Alex Karp has said, “Understanding the collective judgment of imperfect people in uncertain domains is the real frontier of intelligence.” He was speaking about intelligence analysis, but the principle applies just as well to social media. Real intelligence, human or artificial, emerges not from isolation but from aggregation with awareness: knowing when to listen, when to weigh, and when to dissent.

Listening at Scale

This is the paradox every product builder faces: we need crowd insight, but most tools give us crowd metrics. We get volume without meaning, sentiment without story. What if we could change that?

This is where Crowdlistening enters the picture: a platform designed to systematically listen to the internet the way great founders listen to their customers, not by counting mentions but by mapping meaning.

Traditional social analytics measure volume, sentiment, and reach. But these metrics flatten emotion into numbers. They strip away what makes human expression meaningful: tone, humor, frustration, context. Crowdlistening instead seeks to surface meaning clusters: patterns in how people express emotion, share experience, and assign value.

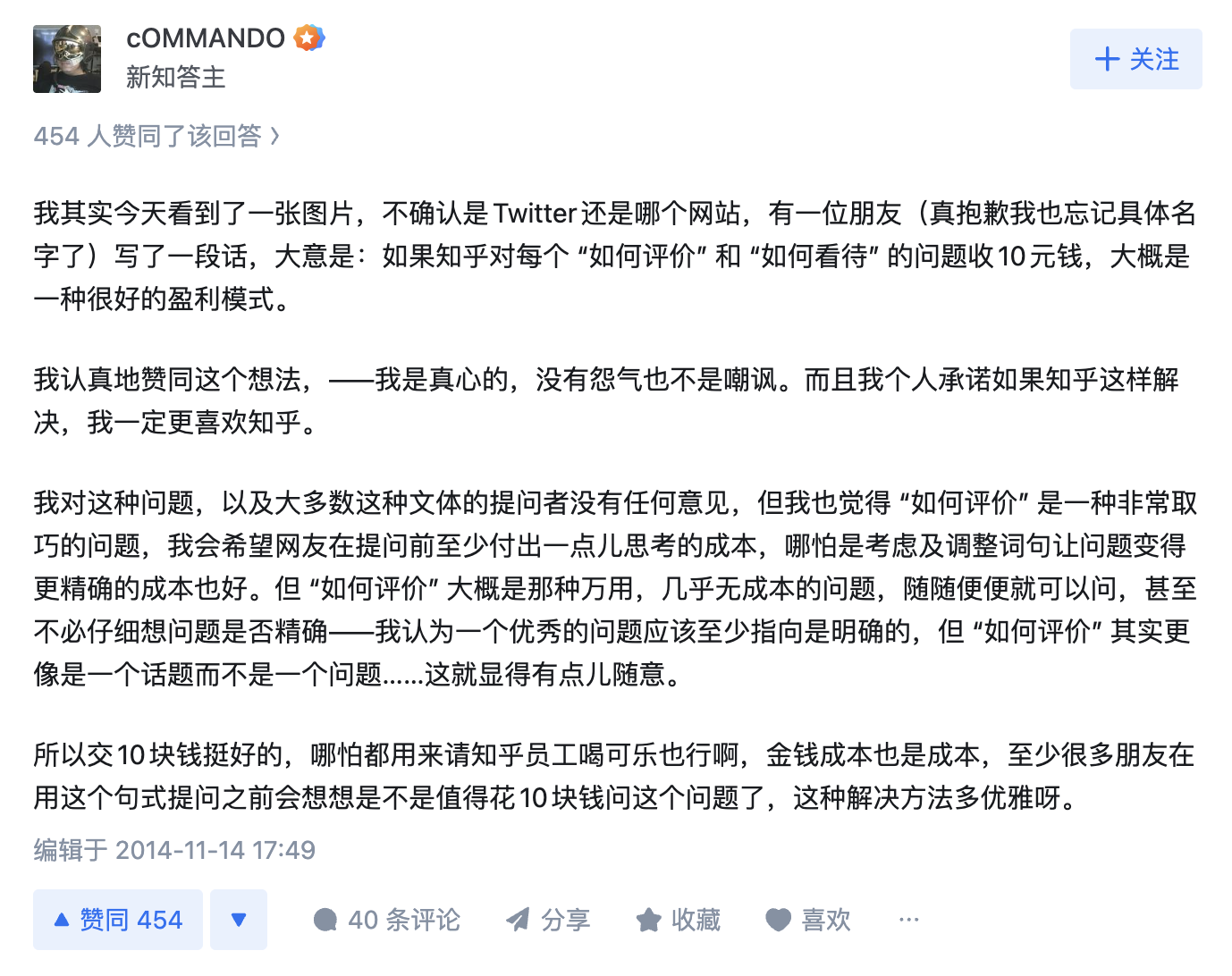

Chinese social media reveals how platform mechanics shape crowd behavior. This Zhihu discussion explores how social networks are inherently about human-to-human interaction, not just Q&A formats, highlighting the social substrate beneath all crowd intelligence.

Imagine thousands of YouTube comments on a new AI tool. A typical dashboard might tell you it’s “70% positive.” Crowdlistening reveals something deeper:

that creators praise its creative control but distrust its data usage,

that early adopters joke about “prompt fatigue,”

that a sub-community is already remixing it into new workflows.

These are not metrics. They’re narratives. And for product builders, narratives are where insight lives.
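The gap between a single sentiment score and a set of narratives can be sketched in a few lines. The example below is a toy illustration, not a description of how Crowdlistening works: the comments, their sentiment labels, and the keyword lists in `THEMES` are all hypothetical, and naive keyword matching stands in for whatever clustering a real system would use.

```python
from collections import defaultdict

# Hypothetical comments with hand-assigned sentiment labels (illustrative only).
COMMENTS = [
    ("Love how much creative control this gives me", "positive"),
    ("Finally, real creative control over the output", "positive"),
    ("Creative control is great, but where does my data go?", "negative"),
    ("Not sure I trust their data usage policy", "negative"),
    ("Prompt fatigue is real, send help lol", "positive"),
    ("Day 3 of prompt fatigue, still here though", "positive"),
    ("I wired it into my editing workflow and it just works", "positive"),
    ("Remixed it with my thumbnail workflow, huge time saver", "positive"),
    ("Great tool overall", "positive"),
    ("Would not upload client data to this", "negative"),
]

# Hypothetical theme -> keyword mapping; a stand-in for real clustering.
THEMES = {
    "creative control": ["creative control"],
    "data usage": ["data"],
    "prompt fatigue": ["prompt fatigue"],
    "new workflows": ["workflow"],
}


def positive_share(comments):
    """The dashboard view: one number for the whole crowd."""
    positives = sum(1 for _, sentiment in comments if sentiment == "positive")
    return positives / len(comments)


def group_by_theme(comments, themes):
    """The narrative view: which comments speak to which theme.

    A comment can land in more than one theme, and comments that match
    no keyword are left out of the result.
    """
    grouped = defaultdict(list)
    for text, sentiment in comments:
        lowered = text.lower()
        for theme, keywords in themes.items():
            if any(keyword in lowered for keyword in keywords):
                grouped[theme].append((text, sentiment))
    return dict(grouped)


if __name__ == "__main__":
    print(f"Overall: {positive_share(COMMENTS):.0%} positive")
    for theme, items in group_by_theme(COMMENTS, THEMES).items():
        print(f"\n{theme} ({len(items)} comments)")
        for text, sentiment in items:
            print(f"  [{sentiment}] {text}")
```

The overall score says the crowd is mostly happy. The grouped view shows that every comment touching on data is negative, and that the “positive” prompt-fatigue jokes carry frustration that a sentiment label alone misses.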

From Data to Dialogue

Listening to the crowd doesn’t mean surrendering to it. It means learning from its distributed intelligence. The crowd may not always be right, but it’s always revealing.

Crowds show us contradictions between what people say they want and what they actually do. They highlight emotional undercurrents that surveys miss, such as frustration masked as humor or excitement mixed with fear. When interpreted thoughtfully, these signals help teams design with empathy rather than ego.

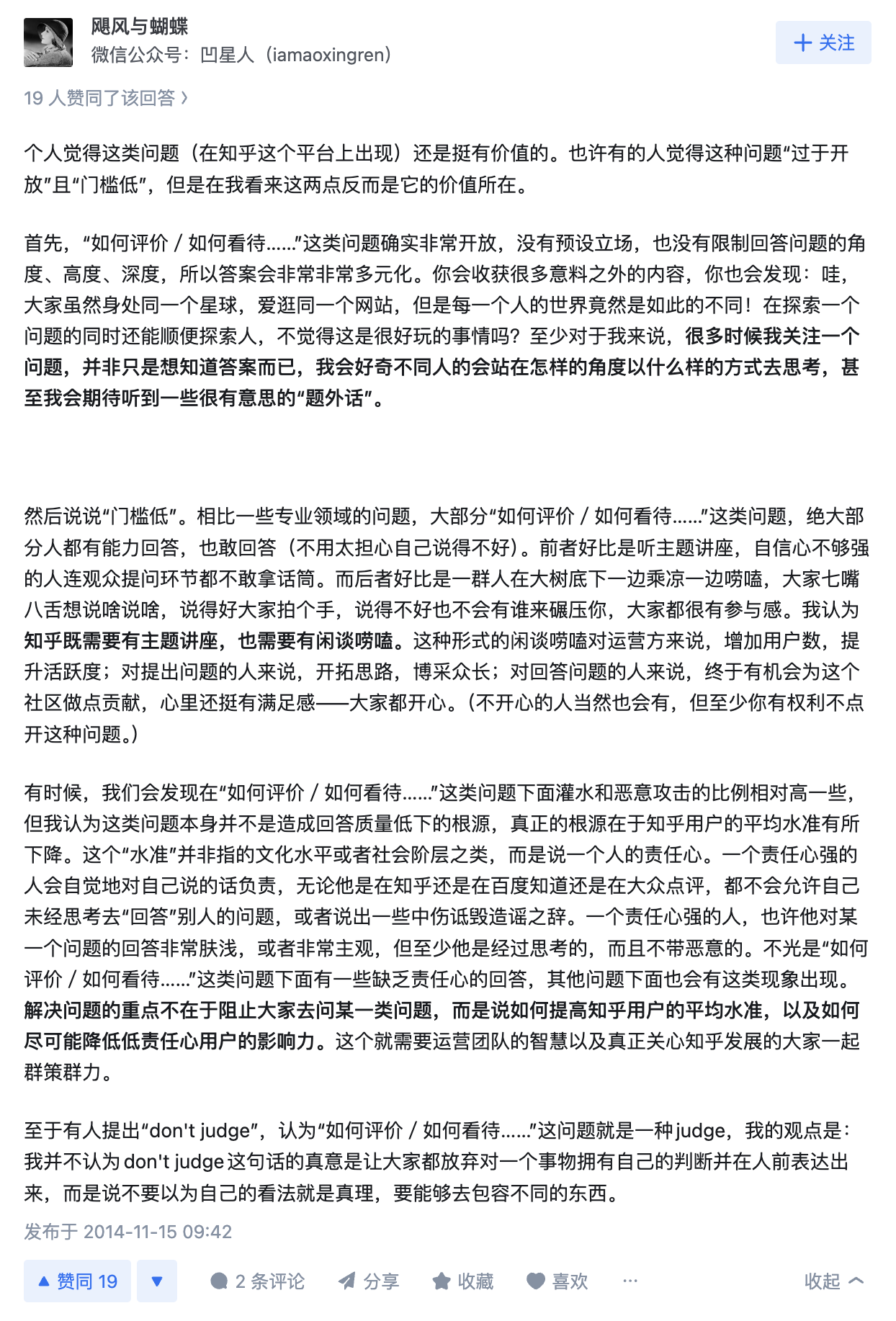

The humility of crowd discourse: “Because our knowledge is limited, we can’t make accurate judgments.” This admission of epistemic humility creates space for collective learning rather than individual certainty.

Platform design influences discourse quality. When questions allow diverse perspectives, the hope is that by reading the answers, people find intellectual peace rather than taking sides. That is a design philosophy for constructive crowd dynamics.

In this sense, crowd intelligence is a mirror, not a manual. It reflects the messy, contradictory human condition that every good product ultimately serves. The best builders don’t seek consensus; they seek comprehension. They use the crowd’s collective reflection as raw input for intuition and design judgment.

The Moral Dimension of Listening

Karp often argues that the purpose of technology isn’t efficiency but preservation of human agency. For social listening, I think that means being honest about what you’re doing: you’re extracting signal from people who didn’t know they were being analyzed. That comes with some obligation to do it carefully, to trace findings back to actual voices, and to not flatten the thing you’re studying in the process of studying it.

The practical value of crowd wisdom: For unknown domains, crowds help find entry points. For familiar domains, they reveal different problem-solving approaches. The layered relationship between domains mirrors how crowd intelligence operates at different levels of expertise.

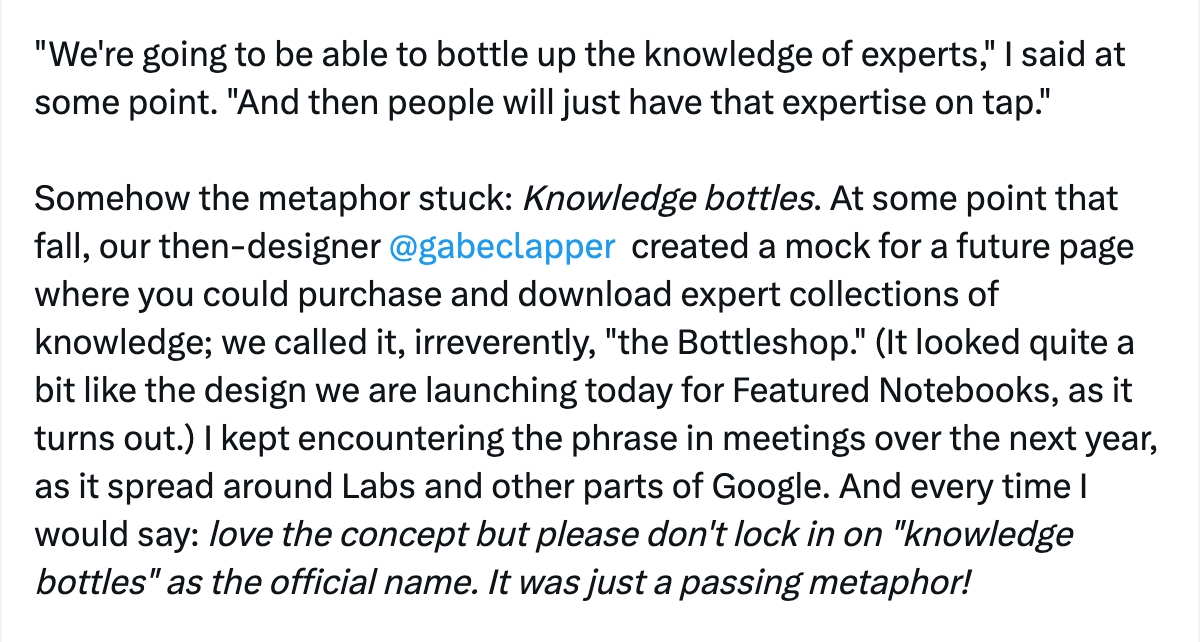

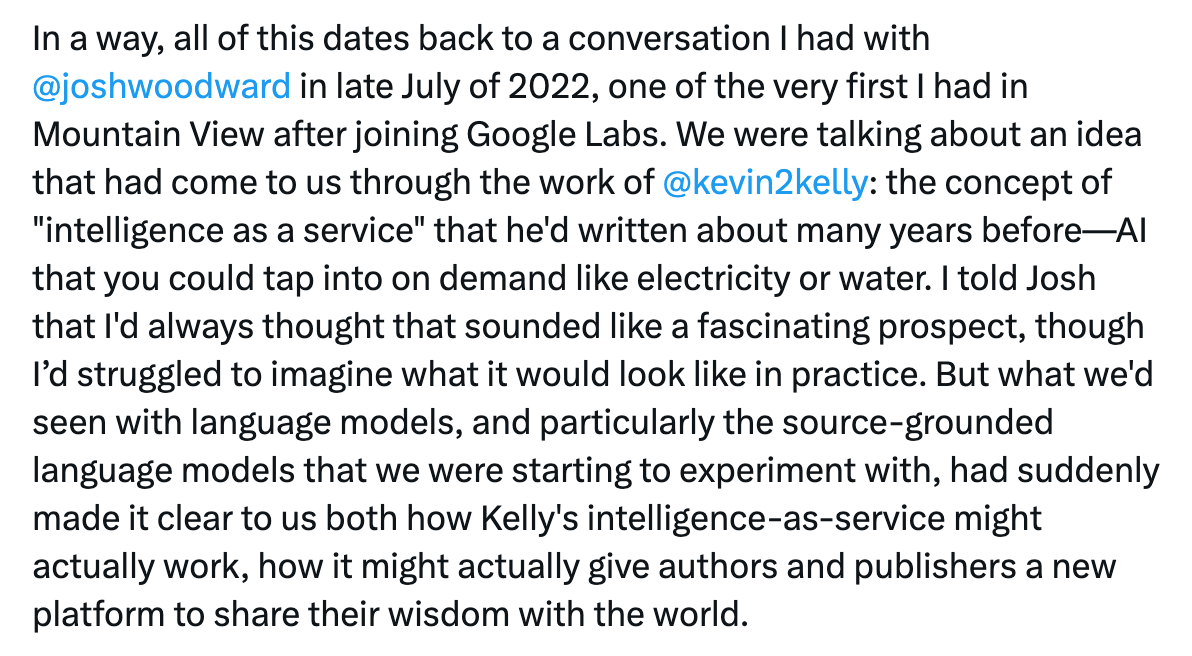

Google’s “knowledge bottles” concept: The vision of packaging expert knowledge for on-demand access reflects the tension between curated expertise and crowd-sourced intelligence, both of them approaches to democratizing knowledge at scale.

Listening as Design

The point isn’t to glorify crowds or discredit experts. It’s to remember that the raw signal is already there: in comments, threads, forums, and all the other places people talk when they’re not being surveyed. Most tools flatten it. The interesting design challenge is figuring out how to preserve what makes it worth reading.

The meta-discussion of crowd platforms: A user proposes that Zhihu could monetize by charging for “How to evaluate” questions, recognizing the inherent value in structured crowd inquiry. It shows how the crowd can optimize its own intelligence-gathering mechanisms.

The anatomy of crowd discourse: This meta-analysis of Zhihu’s question patterns shows how format shapes thinking. The comparison to Quora reveals cultural differences in how platforms structure collective intelligence: each format creates different kinds of crowd wisdom.

Crowd Intelligence in Practice

The examples from Chinese social media platforms like Zhihu demonstrate how different cultural contexts shape crowd behavior and intelligence. The structured format of “How to evaluate X” questions creates a framework for systematic collective analysis, while the meta-discussions about platform design show crowds reflecting on their own intelligence-gathering processes.

Understanding platform incentives: This detailed analysis of user-generated content dynamics shows how platform economics influence the quality and nature of crowd contributions, a critical factor in designing systems that harness collective intelligence effectively.

The essence of crowd evaluation distilled: “Actually, crowd evaluation.” Sometimes the most profound insights come in the simplest forms: this minimalist comment captures the fundamental nature of what we’re exploring.

From concept to implementation: The journey from Josh Woodward’s conversations about “intelligence as a service” to practical applications demonstrates how visionary ideas about crowd and artificial intelligence converge into real-world systems that can “bottle up the knowledge of experts.”

References

Alex Karp, Palantir CEO Letters (2023-2024): on collective judgment and moral responsibility in machine intelligence.

Baumeister, R. F., & Leary, M. R. (1995). “The need to belong: Desire for interpersonal attachments as a fundamental human motivation.” Psychological Bulletin, 117(3), 497-529.

Surowiecki, J. (2004). The Wisdom of Crowds. Anchor Books.