Thesis role: System design — how CrowdListen converts ambiguity into agent-ready execution context.

Why this product exists: ambiguity breaks execution

In the agent economy, ambiguity compounds cost quickly. Teams can ingest massive volumes of comments, videos, and feedback and still miss the decision signal. Dashboards may look complete, but at prioritization time the core questions remain unanswered: which pain points are durable, which are transient, and what should be built now.

This is where execution quality breaks down. If ambiguity is not reduced early, it propagates through planning, handoff, and implementation. Agents can execute quickly, but they execute what they are given. When context is fuzzy, speed amplifies misalignment. CrowdListen is designed to reduce that ambiguity before work is delegated, so the transition from signal to decision to execution remains grounded in evidence.

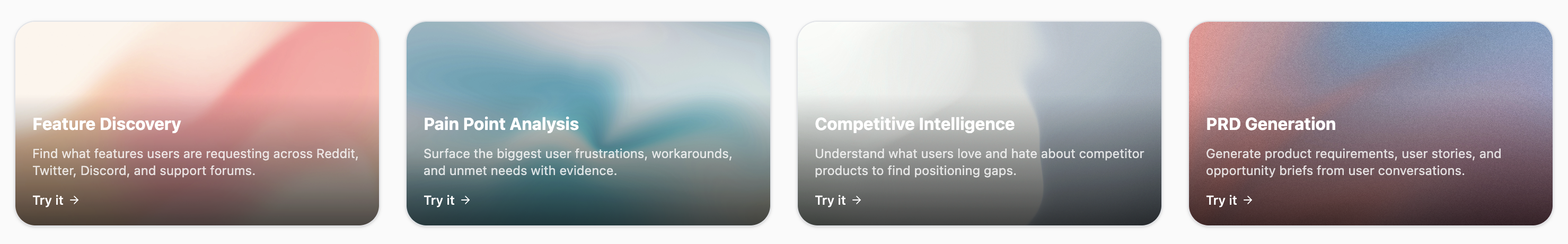

Product Suite Overview

CrowdListen is built around a specific execution failure we kept seeing: feedback is fragmented across channels, synthesis is manual, and intent gets lost between research, planning, and delivery. Most product organizations can collect signal but cannot preserve user context through implementation. That is where quality drops: requirements become abstract, handoffs become lossy, and coding agents produce work that is technically correct but strategically misaligned.

We designed the product as one connected operating flow instead of disconnected tools. Feed captures and structures raw audience signal, Workspace turns that signal into evidence-backed product direction, and Tasks routes scoped work to agents with enough context to execute reliably. The system is not trying to generate more artifacts; it is trying to preserve decision quality from first observation to shipped output.

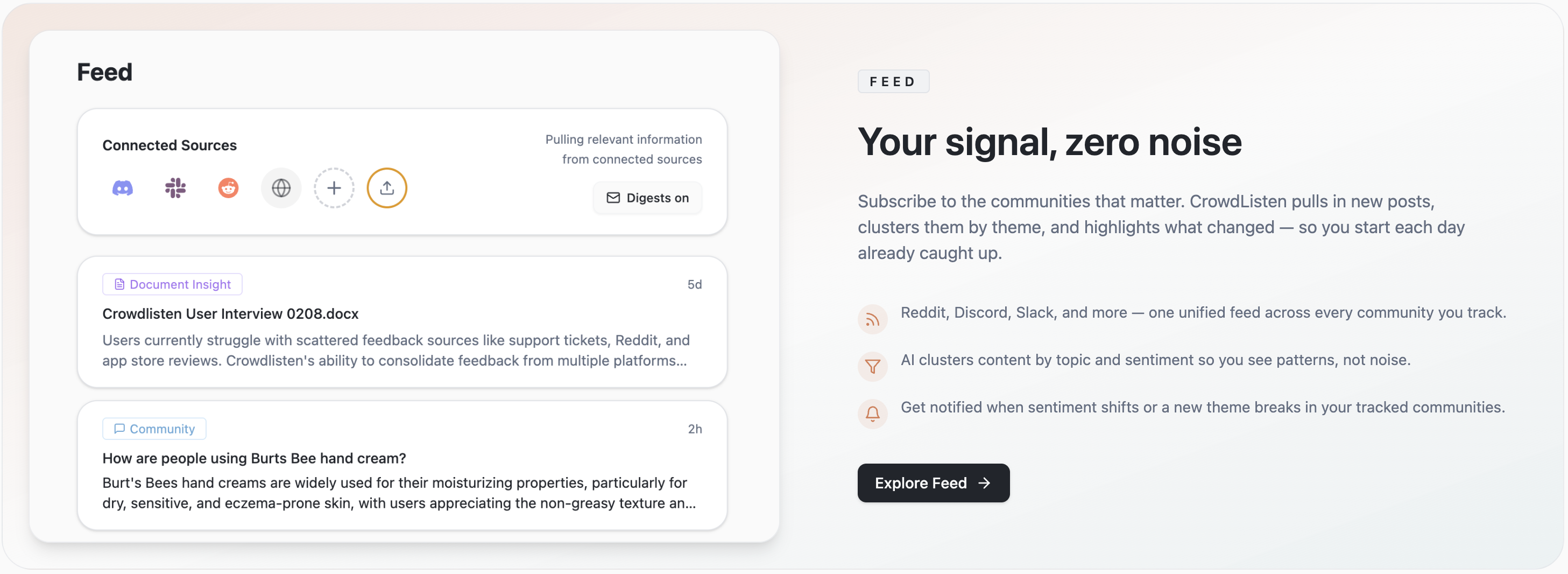

Feed

Feed consolidates cross-channel conversation into a single signal layer covering pain points, feature requests, objections, and recurring workarounds. Instead of relying on raw mention or keyword counts, it clusters comments by meaning and tracks how long each cluster persists, so teams can distinguish temporary noise from durable demand. The output is a structured view of what users are actually asking for, in their own language, with enough specificity to drive product decisions.
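The difference between counting mentions and tracking persistence can be illustrated with a small sketch. It assumes comments have already been assigned to meaning clusters by an upstream step that is not shown, and that each mention carries a date. The function names, the weekly bucketing, and the 0.5 threshold are illustrative assumptions, not a description of how Feed is actually implemented.

```python
from collections import defaultdict
from datetime import date


def persistence_by_cluster(mentions):
    """Return, per cluster, the share of observed weeks in which it appears.

    `mentions` is an iterable of (cluster_id, date) pairs. Cluster ids are
    assumed to come from an upstream clustering step that is not shown here.
    """
    weeks_seen = defaultdict(set)
    all_weeks = set()
    for cluster_id, day in mentions:
        iso_year, iso_week = day.isocalendar()[:2]
        weeks_seen[cluster_id].add((iso_year, iso_week))
        all_weeks.add((iso_year, iso_week))
    if not all_weeks:
        return {}
    return {c: len(w) / len(all_weeks) for c, w in weeks_seen.items()}


def split_durable(scores, threshold=0.5):
    """Split clusters into durable and transient using a hypothetical threshold."""
    durable = sorted(c for c, s in scores.items() if s >= threshold)
    transient = sorted(c for c, s in scores.items() if s < threshold)
    return durable, transient


# Hypothetical data: one complaint recurs every week, the other spikes once.
mentions = [
    ("slow-export", date(2024, 1, 1)),
    ("slow-export", date(2024, 1, 8)),
    ("slow-export", date(2024, 1, 15)),
    ("slow-export", date(2024, 1, 22)),
    ("login-outage", date(2024, 1, 9)),
    ("login-outage", date(2024, 1, 9)),
    ("login-outage", date(2024, 1, 10)),
    ("login-outage", date(2024, 1, 10)),
    ("login-outage", date(2024, 1, 11)),
]

scores = persistence_by_cluster(mentions)
durable, transient = split_durable(scores)
```

In this toy data the one-week spike has more mentions than the recurring complaint, so a raw count would rank it higher. Scoring by the share of weeks in which a cluster appears ranks the recurring complaint as the durable one.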

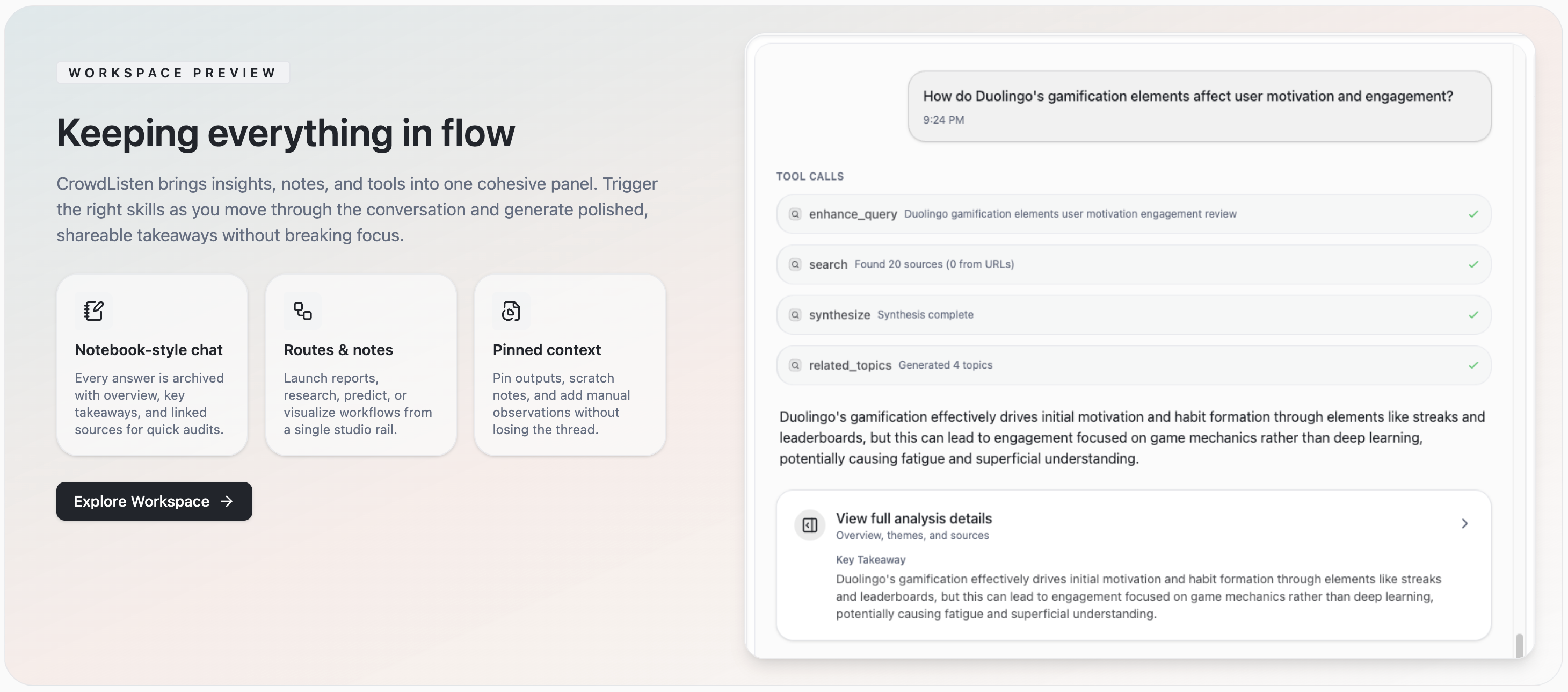

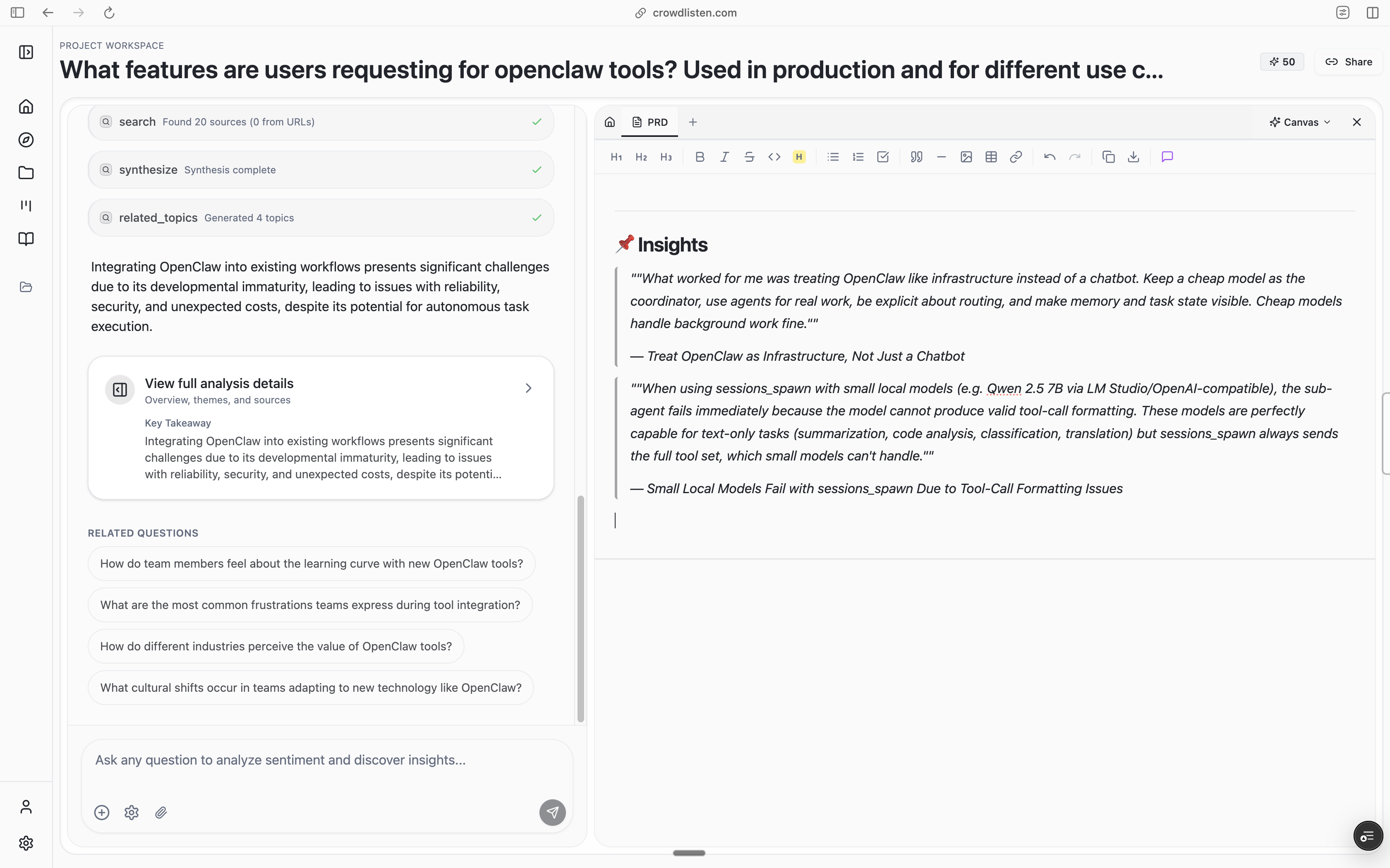

Workspace

Workspace is where teams turn raw signal into decisions. It supports conversational exploration of the problem space, helps validate hypotheses against evidence, and produces richer product artifacts than traditional summary docs. The emphasis is not on writing longer PRDs; it is on preserving rationale, constraints, and user context so that every decision remains traceable back to real audience behavior.

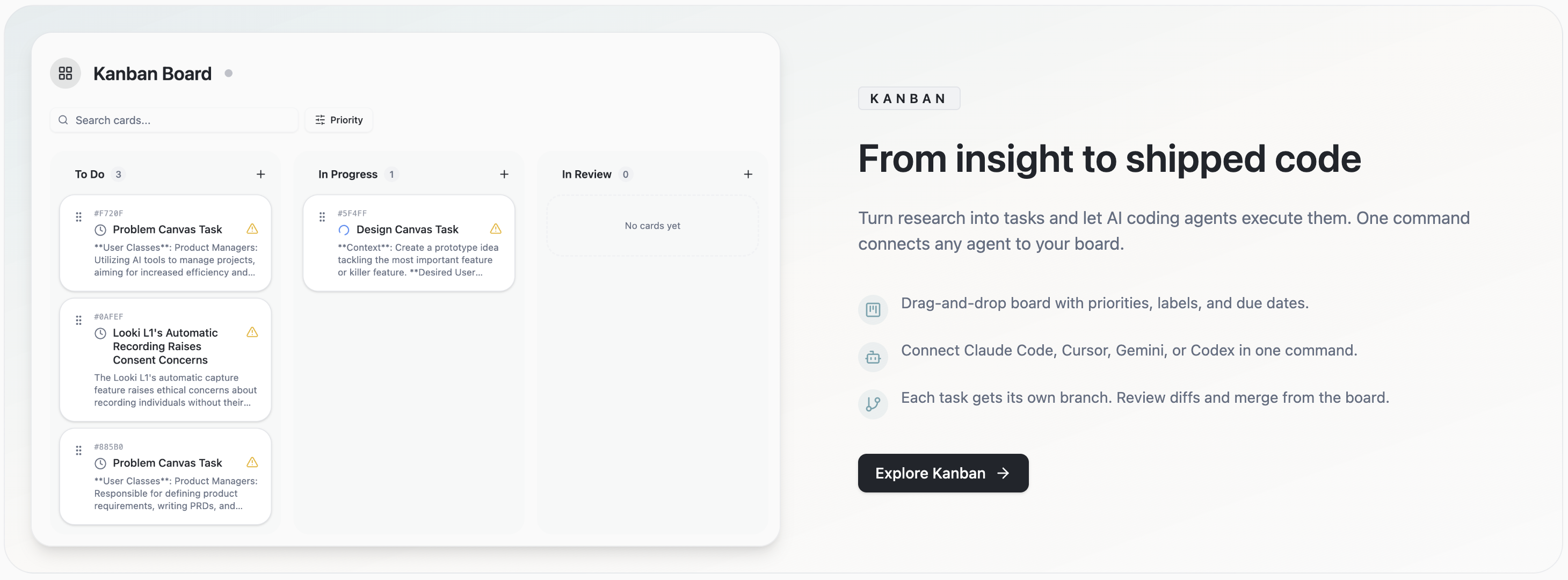

Tasks

Tasks closes the loop between product intent and implementation. It decomposes specs into executable tasks and routes them to coding agents with project context intact, which reduces drift between what the team intended and what actually gets built. This is the layer that turns CrowdListen from an analysis product into an execution system: user signal becomes prioritized work, and prioritized work becomes shipped outcomes with accountability.
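One way to picture "project context intact" is a task record that carries the spec's rationale, constraints, and evidence references with it. The sketch below is a hypothetical data model: the `Spec` and `AgentTask` types, their fields, and the `decompose` function are assumptions made for illustration, not CrowdListen's actual schema.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Spec:
    """A product spec with the context that should survive decomposition."""
    title: str
    rationale: str
    constraints: Tuple[str, ...]
    evidence_ids: Tuple[str, ...]  # references back to audience evidence
    steps: Tuple[str, ...]         # units of work, already scoped by the team


@dataclass(frozen=True)
class AgentTask:
    """One executable task, carrying its parent spec's context."""
    summary: str
    spec_title: str
    rationale: str
    constraints: Tuple[str, ...]
    evidence_ids: Tuple[str, ...]


def decompose(spec: Spec) -> List[AgentTask]:
    """Create one task per step, copying the spec's context onto each task."""
    return [
        AgentTask(
            summary=step,
            spec_title=spec.title,
            rationale=spec.rationale,
            constraints=spec.constraints,
            evidence_ids=spec.evidence_ids,
        )
        for step in spec.steps
    ]


# Hypothetical spec used only to exercise the sketch.
spec = Spec(
    title="Faster CSV export",
    rationale="Recurring complaints that exports time out on large projects",
    constraints=("No change to the export file format",),
    evidence_ids=("cluster:slow-export",),
    steps=("Stream rows instead of buffering", "Add a progress indicator"),
)
tasks = decompose(spec)
```

The design choice here is that context is copied onto every task rather than looked up later, so an agent that receives a single task still sees why the work exists and which constraints apply.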

Why this structure matters

The thesis is simple: in an agent-driven product economy, the bottleneck is no longer writing code; it is preserving intent. Teams that can carry user context through every handoff will iterate faster and make better product decisions, while teams that lose context will scale misalignment. CrowdListen is designed to be the PM layer for agents by translating audience insight into agent-ready specifications that remain grounded in evidence.

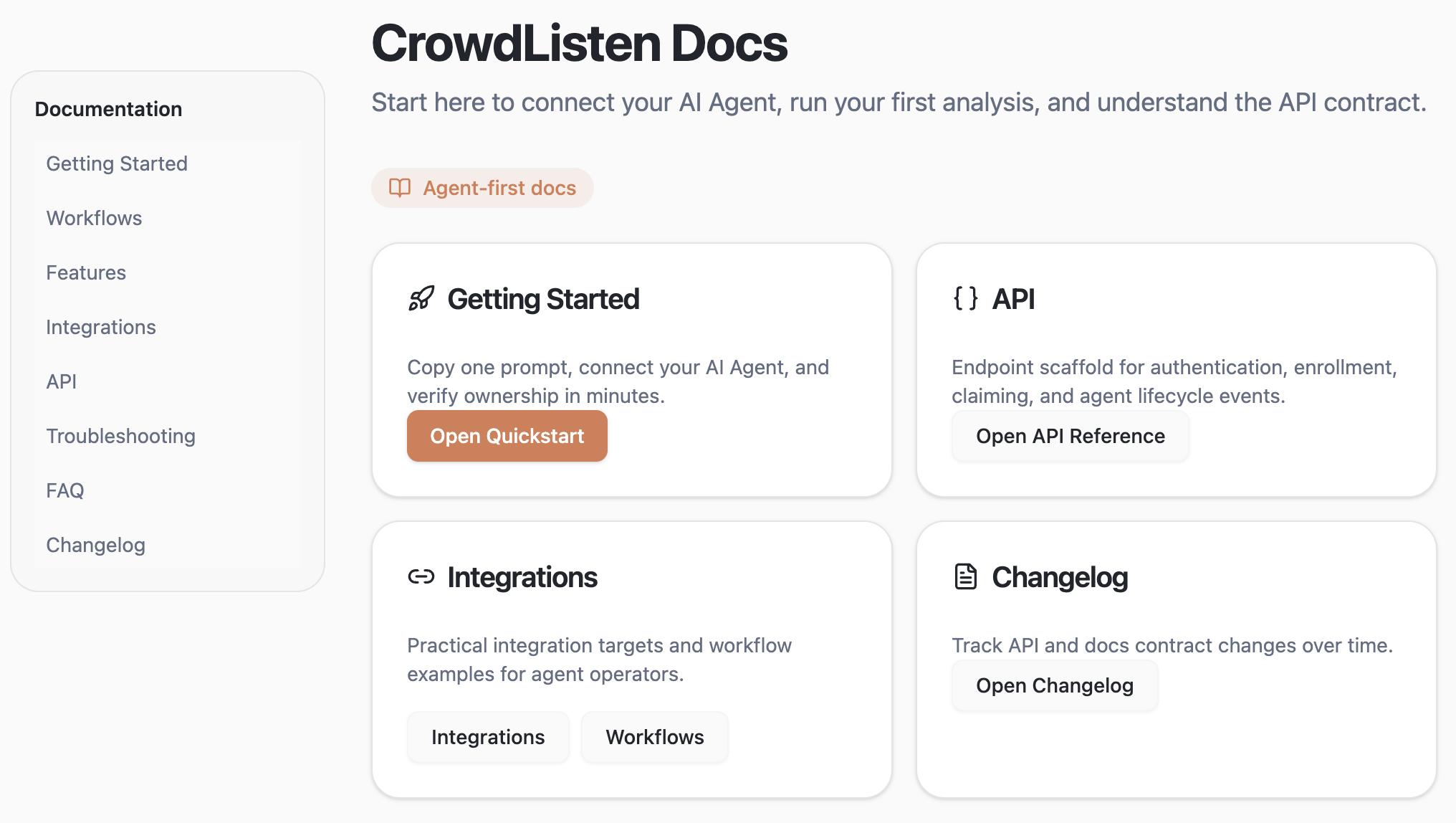

Tooling for agents: MCPs and skills that connect directly to CrowdListen

A large part of our execution runs through an agent integration layer. In practice, we treat CrowdListen as tooling for agents rather than a dashboard that humans operate manually. We expose MCP (Model Context Protocol) servers and agent skills so that agents can call product features directly, pull evidence, and convert findings into work artifacts.

This shows up in two concrete examples.

1) Product management for agents (delegating ambiguity)

The first pattern is delegating ambiguity to agents while keeping intent intact. Agents ingest signals across channels, connect dots between recurring pain points and feature requests, and surface structured opportunities that can be acted on immediately. Instead of handing agents vague summaries, we route evidence-backed context and constraints so they can turn fragmented conversation into actionable feature proposals.

A meaningful share of this workflow now runs through our agent integration layer: source ingestion, synthesis, and conversion into agent-ready specs and tasks. The objective is not more reporting; it is less ambiguity between signal, decision, and execution.
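For readers unfamiliar with MCP, the sketch below shows the general shape of a tool invocation an agent might send. The JSON-RPC 2.0 envelope and the `tools/call` method come from the Model Context Protocol. The tool name `search_pain_points` and its arguments are hypothetical, since this document does not list the tools CrowdListen actually exposes.

```python
import json
from itertools import count

# Simple incrementing ids so each request can be matched to its response.
_request_ids = count(1)


def build_tool_call(tool_name, arguments):
    """Build a JSON-RPC 2.0 request for MCP's `tools/call` method."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool name and arguments, used only for illustration.
request = build_tool_call(
    "search_pain_points",
    {"query": "export is slow", "min_weeks_persistent": 3},
)
payload = json.dumps(request)
```

The point of the example is that the agent asks for evidence with explicit parameters instead of receiving a prose summary, which keeps the request and its result inspectable.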

2) Actionable insights for agents, with agents

The second pattern is the insight loop itself: turning broad social data into detailed, operational insight that agents can use directly. It addresses the insight paradox: teams need scale and depth at the same time, but most tools force a tradeoff between high-volume aggregation and high-fidelity interpretation.

CrowdListen is designed to close that gap by combining large-scale signal capture with structured synthesis that preserves nuance. In practice, this means agents can move from fragmented discussion to usable decisions with less manual translation and less context loss.

Early validation supports this direction. In conversations with enterprise teams, including L’Oréal, we repeatedly saw the same outcome: when synthesis is structured and context is preserved, teams reduce analysis overhead, respond to shifts earlier, and make faster product calls with higher confidence. The key benefit is not just speed; it is converting audience evidence into better execution decisions without losing fidelity across the workflow.