Over the past few months, I have written about Crowdlisten from several angles: as a distribution partner for vibe-coded products, as insight infrastructure for AI agents, and as a way to preserve human signal in an age of synthetic content. Each framing captured something real. But after working with early users and watching how they actually use the product, a clearer picture has emerged. Crowdlisten is not just an insights tool. It is a feedback layer.

Products Are Conversations, Not One-Time PRDs

The mental model most builders carry is that a product launches, gets feedback, and then gets iterated on. The reality is messier. Feedback does not arrive in a clean batch after launch. It leaks out continuously: in comments on adjacent products, in questions people ask about similar tools, in the language they use to describe problems they did not know had names. The best builders I have worked with treat this ambient signal, rather than post-launch surveys or NPS scores, as their primary input. That is why the feedback loop has to live alongside PRDs and agile planning, not after them.

The problem is that this signal is scattered, unstructured, and ephemeral. A comment on a TikTok video about a competitor disappears into an algorithmic feed within hours. A Reddit thread comparing tools gets buried under new posts. A recurring question in a Discord server never makes it into the product roadmap because nobody is systematically watching.

Crowdlisten’s job is to make this signal legible and actionable, in real time.

What We Have Learned

Working with the first cohort of builders, three patterns have become clear.

Framing matters more than features. The same product, described differently, produces wildly different engagement. One builder’s tool was ignored when positioned as “an AI writing assistant” but gained traction when a commenter described it as “the thing that finally made me stop staring at blank pages.” That reframe did not come from the builder. It came from the crowd. Crowdlisten’s job is to surface these reframes before they disappear.

Objections are more valuable than praise. Positive comments feel good but teach little. The comments that drive real product iteration are the ones that say “I would use this if…” or “This almost works but…” These conditional statements contain the exact specification for what to build next. We now weight objection clusters higher than sentiment scores in our analysis.
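To make the idea of weighting objections above praise concrete, here is a minimal sketch. It assumes simple phrase matching; the patterns, the weights, and the function names are all hypothetical illustrations, not Crowdlisten's actual analysis.

```python
import re

# Hypothetical phrases that signal a conditional objection.
# These are illustrative only, not Crowdlisten's actual rules.
OBJECTION_PATTERNS = [
    re.compile(r"\bi would use this if\b", re.IGNORECASE),
    re.compile(r"\balmost works but\b", re.IGNORECASE),
]

# Assumed weights: an objection counts for more than any other comment.
OBJECTION_WEIGHT = 3.0
DEFAULT_WEIGHT = 1.0


def is_objection(comment: str) -> bool:
    """Return True if the comment contains a conditional objection phrase."""
    return any(p.search(comment) for p in OBJECTION_PATTERNS)


def score_comment(comment: str) -> float:
    """Give objections a higher weight than all other comments."""
    return OBJECTION_WEIGHT if is_objection(comment) else DEFAULT_WEIGHT


def rank_comments(comments: list[str]) -> list[str]:
    """Sort so objections surface first; ties keep their original order."""
    return sorted(comments, key=score_comment, reverse=True)


comments = [
    "Love it!",
    "I would use this if it exported to PDF",
    "This almost works but it is slow",
]
ranked = rank_comments(comments)
```

In this sketch the two conditional comments rise above the praise, which is the behavior the paragraph above describes. A real system would need something far more robust than fixed phrases.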

Speed of learning determines speed of growth. The builders who reach their first thousand users fastest are not the ones who produce the most content or spend the most on ads. They are the ones who close the loop between audience reaction and product change most quickly. A builder who ships a feature mentioned in comments within forty-eight hours creates a narrative of responsiveness that compounds. The audience feels heard, shares more, and becomes invested in the product’s evolution.

From Insights to a Feedback Layer for PMs

These observations have shaped how Crowdlisten is evolving. The initial product was oriented around content creation: take crowd insights, produce videos, distribute them. That remains valuable, but it is only half the loop. The other half, feeding audience response back into product decisions, is where the compounding value lives. This is the same distribution-product loop I describe in “Building Has Become Easier — How Do We Fix Distribution?”

The feedback layer works in three stages.

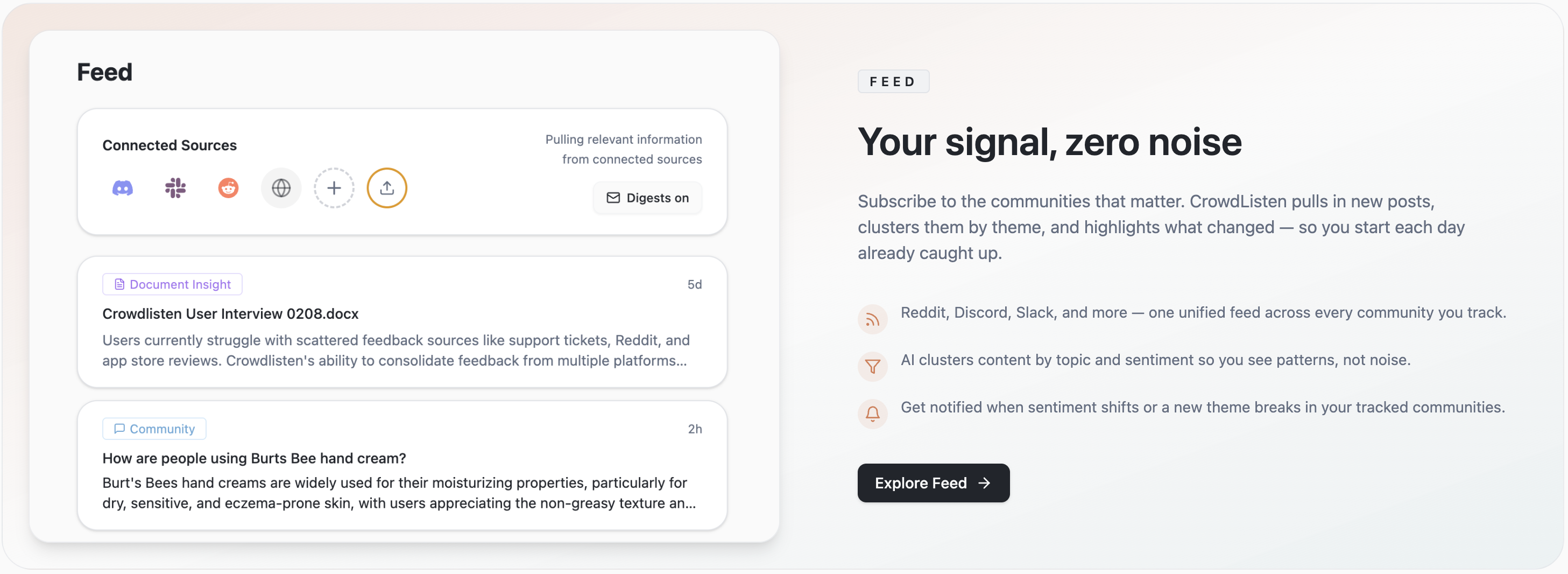

Listen. Crowdlisten continuously monitors conversations across platforms where a builder’s audience and adjacent audiences discuss relevant tools, problems, and workflows. This is not keyword monitoring. It is semantic clustering: understanding what people mean, not just what they say.

Interpret. Raw signal is noisy. Crowdlisten distills it into structured themes: what use cases are emerging, what objections recur, what language resonates, and how perceptions shift over time. These themes are updated continuously, not in weekly reports.

Connect. The interpreted signal feeds directly into two outputs. First, content: new videos, hooks, and framings grounded in what is actually resonating. Second, product insight: specific, evidence-backed suggestions for what to build, change, or stop doing, based on what the audience is telling us through their behavior.
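The Interpret and Connect stages can be sketched in a few lines. This is a toy under stated assumptions: greedy token-overlap (Jaccard) grouping stands in for real semantic clustering, which would more likely use embeddings, and every name and threshold here is hypothetical rather than a description of how Crowdlisten is built.

```python
from dataclasses import dataclass, field


@dataclass
class Theme:
    """A group of comments that appear to discuss the same thing."""
    tokens: set[str]
    comments: list[str] = field(default_factory=list)


def tokenize(text: str) -> set[str]:
    """Lowercase, strip punctuation, and keep words longer than three letters."""
    words = (w.strip(".,!?\"'").lower() for w in text.split())
    return {w for w in words if len(w) > 3}


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of tokens two sets have in common (0.0 if either is empty)."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def interpret(comments: list[str], threshold: float = 0.25) -> list[Theme]:
    """Greedily assign each comment to its most similar theme, or start a new one."""
    themes: list[Theme] = []
    for comment in comments:
        tokens = tokenize(comment)
        best = max(themes, key=lambda t: jaccard(tokens, t.tokens), default=None)
        if best is not None and jaccard(tokens, best.tokens) >= threshold:
            best.comments.append(comment)
            best.tokens |= tokens
        else:
            themes.append(Theme(tokens=set(tokens), comments=[comment]))
    return themes


def connect(themes: list[Theme], min_size: int = 2) -> list[str]:
    """Turn recurring themes into short notes, largest theme first."""
    recurring = [t for t in themes if len(t.comments) >= min_size]
    recurring.sort(key=lambda t: len(t.comments), reverse=True)
    return [f"{len(t.comments)} comments, e.g. {t.comments[0]}" for t in recurring]


comments = [
    "Export to PDF is missing",
    "Please add PDF export, it is missing",
    "Pricing feels too high",
]
themes = interpret(comments)
notes = connect(themes)
```

Here the two export comments merge into one theme and the pricing comment stays on its own, so only the export theme is reported as recurring. Token overlap misses paraphrases entirely, which is exactly the gap that understanding what people mean, not just what they say, is supposed to close.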

The Compounding Effect

Each cycle through this loop makes the next cycle more effective. As Crowdlisten processes more conversations, the semantic models become more nuanced. As more builders use the platform, cross-category patterns emerge: which objections are universal, which framings transfer across domains, which audience segments adopt new tools in predictable sequences.

This is not a moat built on data volume. It is a moat built on interpretive depth. Anyone can scrape comments. The value is in knowing which comments matter, why they matter, and what to do about them.

What This Means for the Thesis

When I started Crowdlisten, the thesis was about crowd intelligence: understanding what people think at scale. That thesis still holds, but it has sharpened. The insight is not just in understanding what people think—it is in connecting that understanding to action fast enough that the feedback becomes a competitive advantage.

In a world where building is cheap and distribution is hard, the builders who win are the ones who learn fastest. Crowdlisten is the infrastructure that makes that learning possible.

The long-term vision has not changed: Crowdlisten becomes the intelligence layer between products and their audiences. What has changed is my understanding of what that layer actually does. It does not just observe. It closes loops. And closed loops compound.