Is it creative to screenshot someone else’s video and caption it with other people’s comments? This seemingly simple question hits every creator making rent from content: if AI can remix, analyze, and generate at scale, what’s left that’s genuinely yours?

Through building AI content tools, I’ve discovered the real opportunity isn’t AI replacing creators—it’s AI helping creators understand the massive amounts of content data around them to find genuinely fresh angles. Think of it as having a research team that can analyze millions of posts, comments, and engagement patterns in seconds, then surface the insights that lead to truly original work.

TikTok's content creation interface: Where creators combine video, audio, and engagement elements to build viral content

Note (Nov 4th, 2025): I recently spoke with the head of strategy for MrBeast and, perhaps unsurprisingly, this is exactly what they do and what makes their content so successful. They look for outliers in large amounts of data: videos that genuinely spark viewers’ interest, even in a different domain. Think of a Minecraft simulation with 100 players on each island (male/female), recreated with actual human participants.

Comment analytics revealing audience engagement patterns and content resonance across different demographics

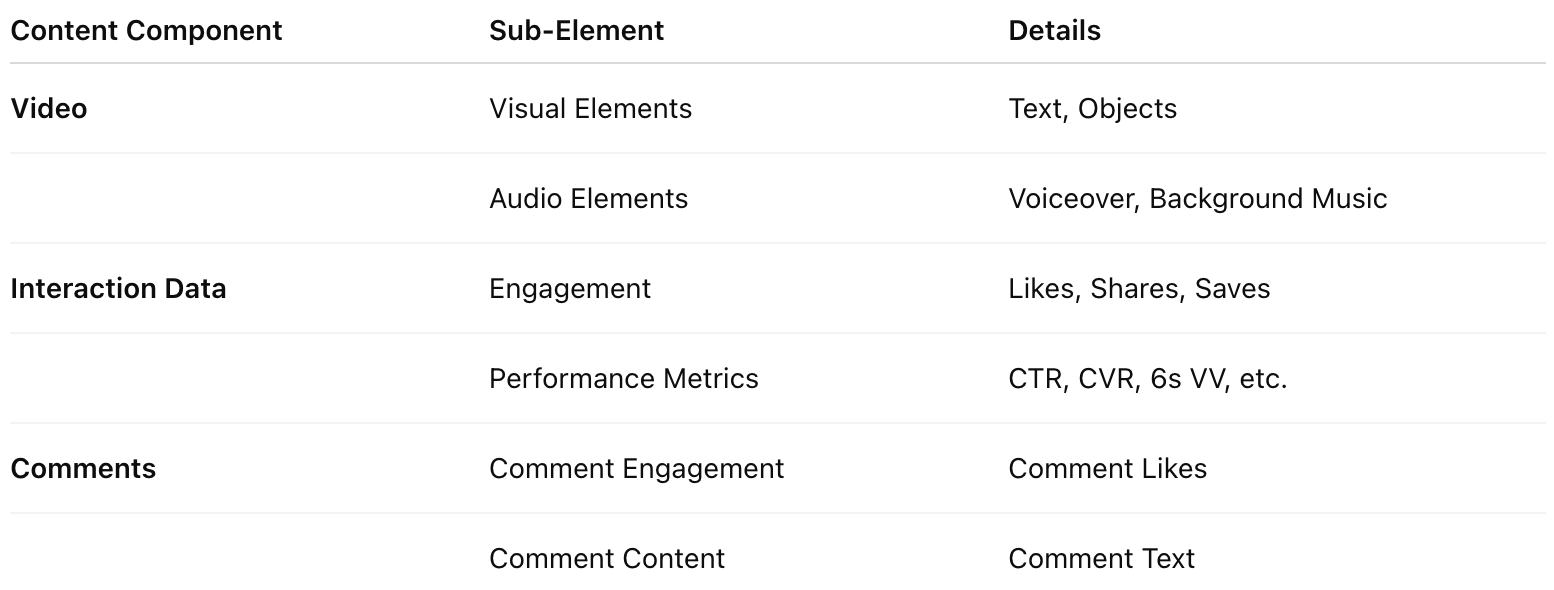

Content structure breakdown: How platforms organize multimodal content across video, interaction data, and comments

As this breakdown shows, what usually gets abstracted away into tabular blobs of text actually carries a lot of information about how content is presented and interacted with. The visual elements and on-screen text grab a user’s attention, while the audio carries a voiceover narrating the message (or sometimes relevant background music). The title and description provide detailed information about the video, while the comments section, often overlooked in current processing workflows, is a goldmine of user interaction and feedback.

The primary comments act as tier 1 opinions, with responses and likes serving as interaction trackers. If users share an opinion, they’ll usually hit the like button instead of posting the same thing again. By linking the content of the video to actual interactions (both tier 1 and tier 2), we get a poll of audience feedback that is timely and goes beyond what surveys or most other kinds of data collection can deliver in the same amount of time.
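
To make the polling idea concrete, here is a minimal sketch of how likes and replies could be folded into a weighted tally of opinions. Everything in it is an assumption for illustration: the `Comment` fields, the `reply_weight` parameter, and the crude text normalization used to merge near-identical comments are hypothetical, not how any platform actually processes comments.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Comment:
    text: str         # the tier 1 opinion as written
    likes: int = 0    # silent agreement: users who liked instead of reposting
    replies: int = 0  # tier 2 interaction count


def poll_opinions(comments, reply_weight=1.0):
    """Return each distinct opinion's share of the total weighted support."""
    support = defaultdict(float)
    for c in comments:
        # Crude normalization (an assumption): lowercase and collapse whitespace
        # so trivially different spellings count as the same opinion.
        key = " ".join(c.text.lower().split())
        # The comment counts as one vote, each like as one more vote,
        # and each reply as weighted engagement.
        support[key] += 1 + c.likes + reply_weight * c.replies
    total = sum(support.values())
    if total == 0:
        return {}
    ranked = sorted(support.items(), key=lambda kv: -kv[1])
    return {opinion: weight / total for opinion, weight in ranked}


# Made-up comments for illustration only.
comments = [
    Comment("Audio is too quiet", likes=40, replies=3),
    Comment("audio is too  quiet", likes=5),
    Comment("Love the editing", likes=50),
]
shares = poll_opinions(comments)
```

A real pipeline would likely merge opinions by meaning rather than by exact text, and replies may signal disagreement as easily as agreement, which is why the reply weight is left adjustable.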

AI isn’t becoming creative—it’s becoming the ultimate creative research assistant. While generative AI struggles to produce truly fresh perspectives, it excels at helping us understand information and generate new insights that lead to genuinely creative work.

What Constitutes Creative Work?

To understand AI’s role in creativity, we need to establish clear boundaries around what constitutes creative work. Consider the common practice of taking screenshots from viral videos and adding captions from popular comments. While this involves some editing, it’s essentially sophisticated copying that accelerates content diffusion while reducing the economic returns of original creation: something like economist Joseph Schumpeter’s “creative destruction” in reverse.

Understanding how platforms structure multimodal content helps us see the complexity involved in creative work.

Real creativity is about choosing a unique perspective. Content with contrast or conflict naturally captures our attention—think of viral TikToks that expose workplace absurdities or Twitter threads that challenge conventional wisdom. But thoughtful, empathetic content is equally creative: the YouTube essayist who helps you understand your own anxiety, or the LinkedIn post that perfectly articulates what you’ve been feeling about remote work.

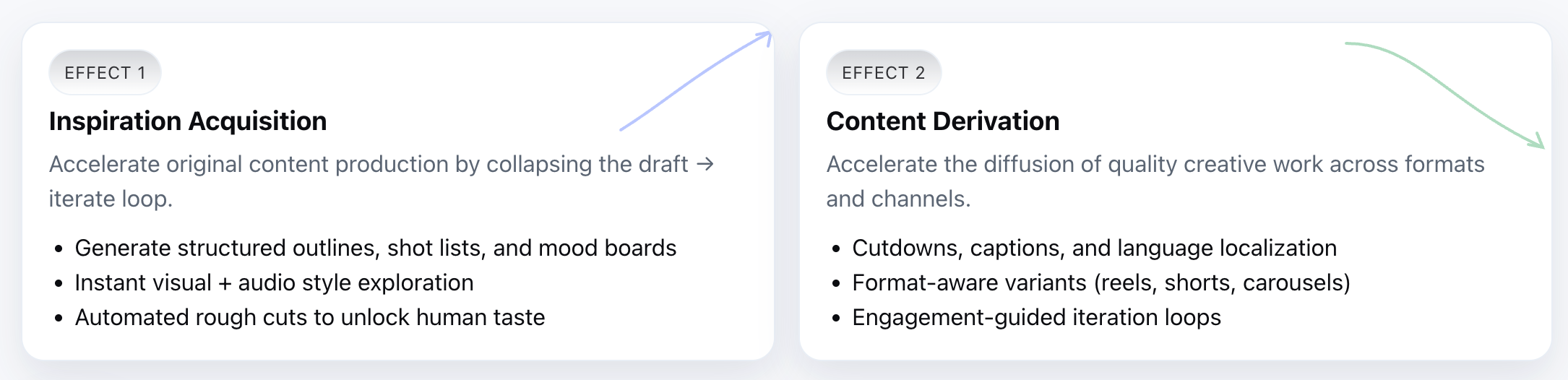

Here’s how I think about the creative ecosystem: there’s production (generating new content) and diffusion (deriving from or spreading existing content). AI’s sweet spot isn’t in either category alone—it’s in helping us understand massive amounts of information to find genuinely new angles and insights.

Right now, creating professional-quality video requires hours of shooting, editing, and post-production. As AI gets better at handling video, audio, and text together, we’re heading toward a world where that same video could be produced in minutes. This changes everything about the creative economy, making tools that help you find new inspiration increasingly valuable. Through this creative assistance, we can achieve two main effects:

Two primary effects of AI creative assistance: Inspiration acquisition and content derivation

- Inspiration acquisition: Accelerating original content production by collapsing the draft → iterate loop

- Content derivation: Accelerating the diffusion of quality creative work across formats and channels

Content Understanding for Enhanced Generation

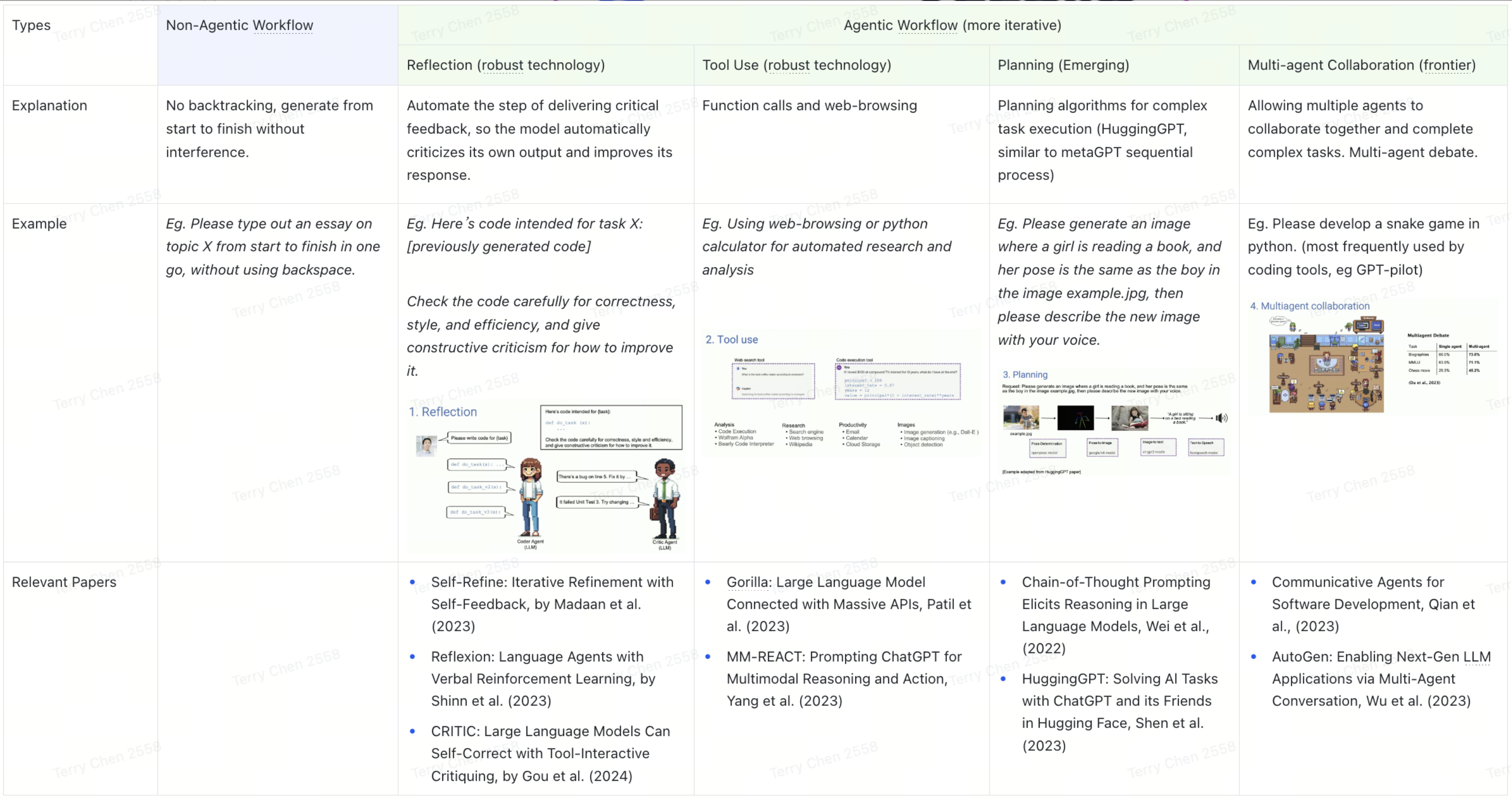

How can we make language models produce outputs that meet our expectations? This challenge breaks down into two distinct problems: (1) we don’t know what our ideal output looks like, and (2) we know what we want, but the language model doesn’t understand us.

Most teams focus on the second problem through a toolkit of techniques: model alignment (training AI to follow human preferences), prompting (crafting better instructions), few-shot learning (showing the model a handful of examples in the prompt), retrieval-augmented generation or RAG (letting AI retrieve from specific databases), fine-tuning (customizing AI for specific tasks), and memory systems (helping AI remember context across conversations). But these approaches are rapidly being commoditized, and many solutions are open-sourced, which explains why so many generative products deliver roughly comparable results.

Different types of AI workflows: From basic generation to iterative collaboration

The real differentiation lies in how we adapt engineering and data processing to specific business scenarios—and more importantly, in solving the first problem: helping users understand what they actually want to create.

August 2025: I was recently asked what role a PM plays in building technical products, especially given how much of the model-side work is handled by technical teams. The short answer, I think, is understanding the product and making sense of data in terms of how it is communicated and what it expresses. One may deal with numbers, but it’s harder to actually understand the people who make up those numbers. In the concrete example of multimodal content understanding, it means preserving the very granularity that makes this data so valuable and proposing technical solutions such as modality alignment, weighted clustering, agent triage, and content rewriting.

Brand Understanding and AI Integration

Brand intelligence platform: AI systems learning to understand brand voice, visual identity, and content guidelines for contextual generation

The evolution toward brand-aware AI represents a significant shift in content generation capabilities. Instead of producing generic output that requires extensive human editing, these systems can understand context—what works for a luxury brand versus a startup, what tone resonates with different demographics, what visual styles align with brand guidelines.

November 2025: I saw the recent release of Google Pomelli, and I think it is a great example of how a general-purpose technology moves from research and public beta to a more grounded, applied use case that delivers real time savings and value. Like Typeface, it essentially creates a brand kit that frees users from prompting repeatedly, often without knowing how to describe their style effectively.

AI brand training interface: How multimodal brand kits teach AI systems to generate content that aligns with specific brand requirements

The training process involves feeding AI systems examples of successful brand content across multiple modalities—text, images, videos, and audio. The system learns not just what the brand says, but how it says it, what visual elements it uses, and what emotional tone it maintains. This creates AI that can generate content that feels authentically on-brand without constant human oversight.

Returning to the first problem—“I don’t know what output I want”—this stems from a lack of content understanding. Good script writing requires more than just hooks (“You won’t believe what happens next”), unique selling propositions (USPs), and calls-to-action (CTAs)—it needs a clear angle: content that resonates with the audience, fits the context, and achieves its purpose.

Some products are building brand kits or audience profiles to guide more specific content generation through manually defined style rules or user personas. While these types of configurations will probably become standard, the real breakthrough would be connecting insight data with generation without requiring manual setup every time.

Understanding User Needs

Looking at the creative technology landscape, every category—ad aggregation, competitor tracking, brand insights, performance analysis, content generation—has 3-4 companies offering basically the same thing. The data products feel traditional, while the AI products often just add ChatGPT integrations to existing workflows.

The real opportunity lies in acquiring more granular data and creating smoother interactions. Instead of isolated tools, imagine connecting the entire creative production process where you can participate and adjust at each stage—from initial research through final publication.

Here’s a simple way to think about product value: user value = new experience - old experience - replacement cost. Most products built on foundational language models with minor tweaks deliver limited incremental value. Users still need to craft personalized prompts, and outputs almost always require multiple rounds of editing before they’re usable.
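
As a quick worked example of that formula, the sketch below plugs in made-up scores on an arbitrary 0-10 scale. The function name and all the numbers are hypothetical; the point is only that a small experience gain can be wiped out by the cost of switching.

```python
def user_value(new_experience, old_experience, replacement_cost):
    """user value = new experience - old experience - replacement cost"""
    return new_experience - old_experience - replacement_cost


# Hypothetical scores: a thin wrapper around a foundation model improves the
# experience slightly, but users still pay for prompt crafting and re-editing.
thin_wrapper = user_value(new_experience=7, old_experience=6, replacement_cost=2)

# Hypothetical scores: an integrated workflow improves the experience more
# and lowers the cost of switching.
integrated_workflow = user_value(new_experience=9, old_experience=6, replacement_cost=1)

print(thin_wrapper, integrated_workflow)
```

Under these assumed numbers the thin wrapper comes out negative while the integrated workflow comes out positive, which is the gap the rest of this section is about.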

So how do we increase incremental value? The answer isn’t just better AI—it’s better workflows.

User-Friendly Workflows

Currently, creators mostly call upon individual capabilities or data, but single capabilities are insufficient for full-process script/video generation. Building workflows can help users connect various AI capabilities, reducing friction between tool switches.

The concept of “workflows, not skills” addresses a concrete user need: many users currently rely on 5-10 AI capabilities to complete their creative work, most of them disconnected and requiring frequent switching. A clear workflow lets users call on the relevant tools more efficiently.

I used to think that simply connecting AI capabilities constituted a workflow, but that’s like saying a toolbox is the same as knowing how to build a house. What we call “Language UI” is actually “Prompt UI”: it differs from true language interaction in that it lacks the context and shared understanding present in human conversation.

Think about the difference: you can tell a colleague “make this more engaging” and they understand your brand, audience, and context. With ChatGPT, you need to write a novel-length prompt every single time explaining who you are, what you’re building, and what “engaging” means in your specific context.

The future workflow tools will have human-like elements—they’ll ask follow-up questions, remember previous conversations, and understand your specific goals without you having to explain everything from scratch. Current prompting is probably transitional; eventually, we’ll eliminate the need for context-heavy prompts by building AI that understands your context and generates appropriate guidance automatically.
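
One way to picture this shift is a tool that stores the creator's context once and expands short requests on the user's behalf. The sketch below is a hypothetical illustration, not a description of any existing product: `CreatorContext`, `build_prompt`, and the example brand are all invented names.

```python
from dataclasses import dataclass, field


@dataclass
class CreatorContext:
    brand: str
    audience: str
    tone: str
    history: list = field(default_factory=list)  # earlier requests, kept as memory


def build_prompt(request: str, ctx: CreatorContext) -> str:
    """Expand a short request into a full prompt using the stored context."""
    lines = [
        f"Brand: {ctx.brand}",
        f"Audience: {ctx.audience}",
        f"Tone: {ctx.tone}",
    ]
    if ctx.history:
        # Include only the three most recent requests to keep the prompt short.
        lines.append("Earlier requests: " + "; ".join(ctx.history[-3:]))
    lines.append(f"Task: {request}")
    ctx.history.append(request)  # remember this request for next time
    return "\n".join(lines)


# Hypothetical creator profile, set up once.
ctx = CreatorContext(brand="Acme Coffee", audience="remote workers", tone="warm, dry humor")
first = build_prompt("make this more engaging", ctx)
second = build_prompt("shorten it for a 15-second cut", ctx)
```

The user only ever types the short request; the second prompt already carries the first request as memory. A real system would presumably infer this context from data rather than ask for it up front.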

Multimodal Interaction and Content Ecosystem

Finally, let’s discuss modality. Given the characteristics of different modalities (text is easily editable, images are non-linear, video is linear), different scenarios call for different modalities. The same user may need different interactions in different contexts.

Understanding Content Through Data Visualization

The first layer of multimodal content understanding goes beyond traditional analytics. Rather than just tracking views and likes, the most interesting insights come from clustering comments and opinion spread by category—product feedback, creator engagement, emotional responses. This granular analysis reveals patterns in audience sentiment and helps creators understand not just what performs well, but why it resonates with different audience segments.

Vector visualization analysis: AI-powered semantic mapping revealing hidden relationships between content themes and audience preferences

Brand intelligence extraction: Analyzing fine-grained insights from audience feedback and engagement patterns

But the real magic happens in semantic analysis. Vector embeddings can reveal hidden relationships between content themes that humans might miss. For example, videos about “productivity tips” might cluster surprisingly close to “cooking tutorials” because both satisfy the same underlying need for life optimization. This kind of insight helps creators find unexpected angles and untapped niches.
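
To show what "clustering close" means mechanically, here is a minimal sketch using cosine similarity. The three-dimensional vectors are hand-made toy values chosen to mirror the example above; real embeddings would come from an embedding model and have hundreds of dimensions, and the topic names are only illustrative.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy vectors (an assumption for illustration, not real model output).
topics = {
    "productivity tips": [0.9, 0.1, 0.3],
    "cooking tutorials": [0.8, 0.2, 0.4],
    "celebrity gossip": [0.1, 0.9, 0.2],
}


def nearest(topic, vectors):
    """Return the (name, similarity) of the closest other topic."""
    query = vectors[topic]
    others = (
        (name, cosine_similarity(query, vec))
        for name, vec in vectors.items()
        if name != topic
    )
    return max(others, key=lambda pair: pair[1])


closest_name, closest_score = nearest("productivity tips", topics)
```

With these toy values, "cooking tutorials" comes out as the nearest neighbor of "productivity tips". With real embeddings, the same lookup is what would surface an unexpected pairing for a creator to investigate.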

Content performance metrics: Comprehensive analysis tracking engagement patterns, reach optimization, and conversion effectiveness across content types

The final piece is comprehensive performance analysis that connects creative decisions to business outcomes. This isn’t just about vanity metrics—it’s about understanding which content patterns lead to sustainable audience growth, higher conversion rates, and long-term creator success. When you can see these patterns clearly, you can make more informed creative decisions.

Switching between modal forms (long/short/mixed) and modal types (text/image/audio/video) will become easier, making the same content usable across different scenarios. Users aren’t just people; they’re collections of needs. For instance, I might read text at the office because of the setting, watch videos while waiting in line with nothing to do, and listen to audio while driving or commuting. The same content may need to be available in three modalities (text/video/audio), chosen by scenario. This could be refined further: people speed up reading or listening for higher information intake. Finding ways to adapt the same content to different scenarios without increasing creation costs is another interesting challenge.

Case Study: Voice Synthesis

Take voice synthesis as an example. Technically, this technology is already quite mature: you can clone a voice with just a few minutes of audio. Yet when most people think about AI voice cloning, they imagine phone scams. Sure, there are fun projects like AI David Attenborough narrating random videos, or OpenAI’s GPT-4o launch event, where one of the demo voices was widely compared to Samantha from “Her.” But the most creative use I’ve seen comes from short video creators.

I recently discovered a creator called “Yi Tou Jue Lv” who makes derivative content based on “In the Name of the People” (a 2017 Chinese political drama). Their videos consistently get 500K+ views by doing something brilliant: they take original footage but replace all the narration with AI-synthesized character voices speaking internal monologues and psychological commentary. The result feels like getting inside the characters’ heads in a way the original show never offered.

What makes this work is the creator’s deep understanding of the characters combined with AI’s ability to generate consistent, high-quality voice synthesis. They’re not just copying—they’re creating a completely new layer of interpretation that audiences can’t get anywhere else.

Contrasting Audio & Text

It’s fascinating how differently our brains process audio and text. When we read, we’re essentially interacting with a graphical user interface—scanning, jumping between sections, processing information at our own pace. We’ve evolved sophisticated tools for text: highlighting, bookmarking, section headers, and search functions. Yet despite these advantages, text can feel less engaging than a good conversation.

Audio interfaces evolution: From podcast consumption (left) to social audio saving (right)—platforms adapting to our increasingly audio-first content behaviors and the need to preserve valuable conversations

Speaking, in contrast, is inherently linear and social. There’s something about the human voice that keeps us present—the subtle shifts in tone, the natural pauses, the back-and-forth rhythm. It’s why we can stay engaged in a podcast while walking (and multitask), yet reading typically demands our full attention.

This contrast reveals something deeper about how we process information. Text excels at conveying complex ideas—we can revisit difficult passages, cross-reference concepts, and process at our own speed. Audio shines in maintaining engagement and conveying emotion, even if the content itself is relatively simple. Perhaps the future lies not in choosing between these mediums, but in finding ways to combine their strengths. Imagine an interface that preserves the natural flow of conversation while adding the structural advantages of text—where you could navigate both temporally and conceptually, maintaining both engagement and comprehension.

Conclusion

Roland Barthes' "Death of the Author": When content is created, interpretation rights transfer to the audience

As Roland Barthes suggested with “The Death of the Author,” once content is created, interpretation rights transfer to the audience. We see this everywhere today—YouTube channels that analyze every Marvel movie, TikTok accounts that remix old TV shows, podcast networks that dissect every episode of popular series.

With improvements in AI voice synthesis, character generation, and content manipulation, we’re approaching a future where derivative works based on original intellectual properties can achieve professional quality while satisfying different interpretations and imaginations. The “Yi Tou Jue Lv” example I mentioned earlier is just the beginning.

These perspectives might all exist in the original work, but each remix offers a different angle, providing audiences with unique experiences. There’s still a massive amount of content that people want to see but that isn’t available on any platform. Maybe creativity’s next evolution isn’t about generating entirely new content but about intelligently remixing and reinterpreting what already exists to better satisfy what audiences actually want.

Final Thoughts

While generative AI capabilities evolve rapidly, human nature changes slowly. We overestimate technology’s short-term creative impact (AI won’t replace human creativity next year), but underestimate how fundamentally it will change creative workflows (it’s already happening in ways we’re only beginning to understand).

Making probabilistic models truly creative remains challenging yet fascinating work. The future lies not in AI replacing human creativity, but in building systems that amplify our ability to understand, synthesize, and create meaning from the infinite streams of content around us. That’s the creative challenge I’ll continue working on.

Appendix

This article was originally developed as a presentation and shared internally with TikTok team members during my time there. The content has been adapted for public publication and adjusted to remove potentially sensitive information while preserving the core insights about AI and creativity.

All views expressed in this article are my own and do not represent the official positions or strategies of TikTok or any other organization.

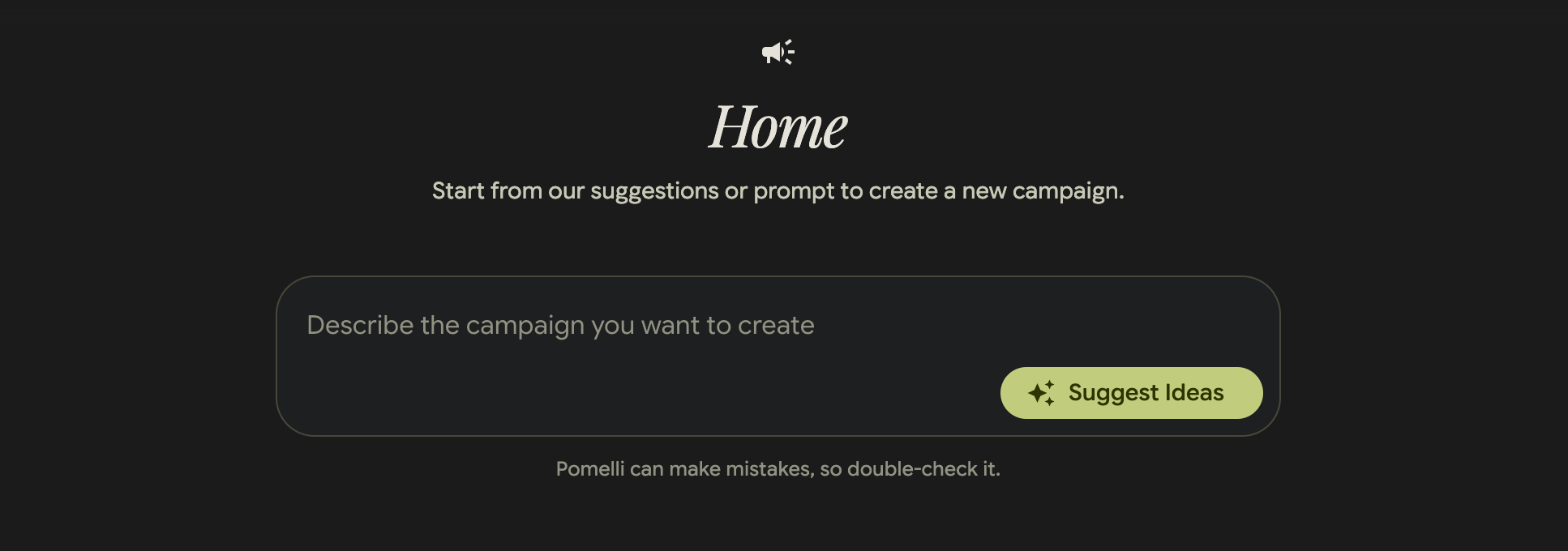

Pomelli’s landing page showcases a visually appealing interface for generating on-brand content, positioned as a “Google Labs” experimental project for business content creation.

The campaign creation interface emphasizes simplicity with a central prompt area and “Suggest Ideas” functionality, but the disclaimer “Pomelli can make mistakes, so double-check it” reveals underlying reliability concerns.

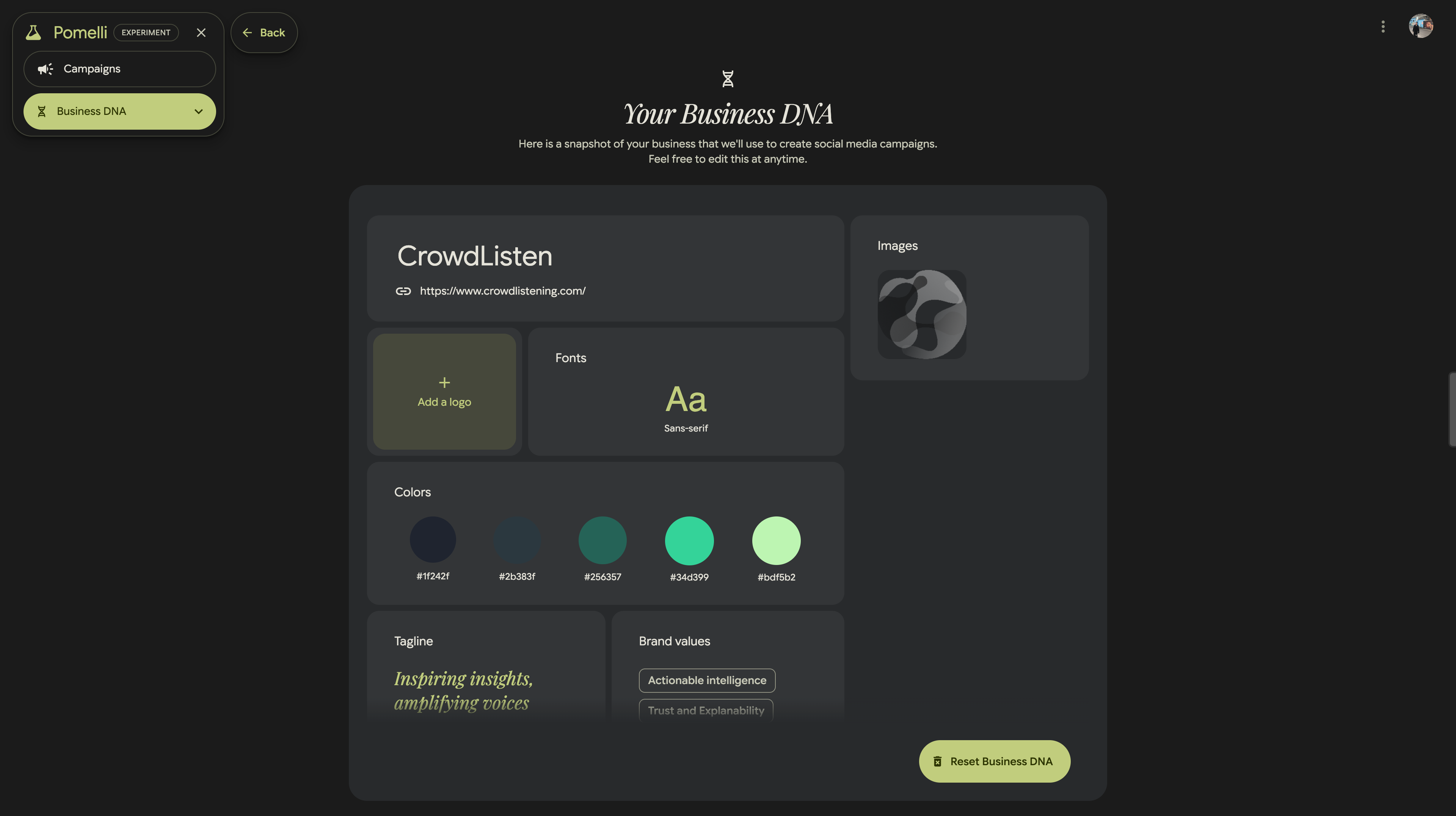

The “Business DNA” setup process captures comprehensive brand information including logos, fonts, color palettes, taglines, and brand values, demonstrating a systematic approach to brand identity integration.